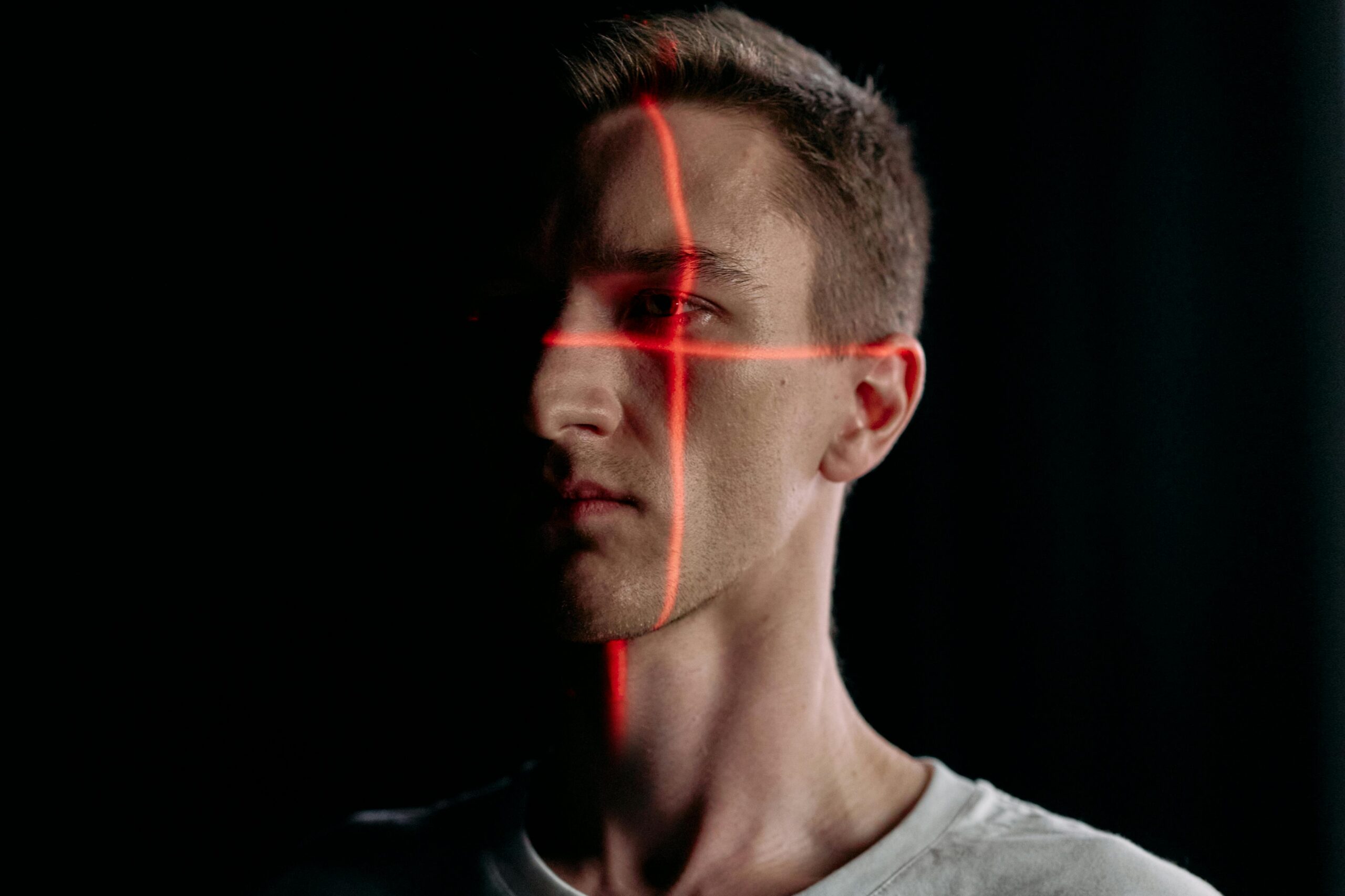

On social entertainment platforms and in content-driven internet ecosystems, images and videos have become the primary forms of daily interaction and expression. However, as generative AI technologies continue to mature, the barrier to producing deepfake content has dropped significantly. As a result, large volumes of AI-synthesized facial images and videos are quietly flowing into online platforms, blending into normal content streams.

Such content does not rely on complex technical attacks. Because it is highly deceptive, it can be uploaded and shared like ordinary content and so slips into platforms unnoticed. This creates new challenges for account security, content compliance, and business risk control.

What Is “Deepfake”?

“Deepfake” refers to synthetic image, audio, or video content generated using artificial intelligence technologies — particularly Generative Adversarial Networks (GANs) and diffusion models. Such content can convincingly transplant a person’s face, voice, or even dynamic expressions onto another individual or scene.

As generative AI becomes increasingly accessible, producing highly realistic facial videos no longer requires professional equipment. The tools are widely available, and such content can now be produced at scale.

Introducing “StarScope” — Built to Address This Challenge

In response to the continuous infiltration of deepfake content into real-world business scenarios, we have gradually developed systematic detection capabilities for AI-generated images and videos through years of practice in identity security and risk control.

As such risks have become commonplace across social entertainment, content moderation, and multi-channel identity data scenarios, fragmented deployment of detection capabilities can no longer meet business demands. Based on this reality, we consolidated our existing capabilities into a structured, service-oriented offering, forming an independently deployable deepfake detection service — StarScope.

StarScope does not rely on any specific facial capture workflow, nor is it limited to a particular identity verification system. Whether content comes from user uploads or from facial images and videos collected across different platforms and channels, StarScope can analyze it for deepfakes.

StarScope Focuses on Whether the Content Itself Is AI-Generated

Unlike traditional facial recognition or identity verification systems, StarScope does not determine whether an identity is authentic. Instead, it addresses a more fundamental question:

Does the image or video under review show signs of AI synthesis, manipulation, or generation?

Based on multimodal detection algorithms and continuously accumulated real attack samples, StarScope performs deepfake analysis on input images or videos and outputs clear detection results along with a risk score.

The risk score represents the probability of deepfake manipulation: the higher the score, the greater the risk. Enterprises can feed the results directly into their existing content moderation, risk control, or security systems.
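As a rough illustration of how a risk score might be consumed downstream, the sketch below maps a score to a moderation action. It is not StarScope's API: the function name, the action labels, the threshold values, and the assumption that the score falls between 0 and 1 are all hypothetical and would need to be aligned with the actual service output and with each platform's own risk policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    """Hypothetical cut-offs; tune them to your own risk tolerance."""
    review: float = 0.5  # at or above this, send to manual review
    block: float = 0.9   # at or above this, reject automatically


def route_by_risk_score(score: float, thresholds: Thresholds = Thresholds()) -> str:
    """Map a deepfake risk score to a moderation action.

    Assumes the score is a probability in [0, 1], where a higher
    value means a higher risk of deepfake manipulation.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"risk score must be within [0, 1], got {score}")
    if score >= thresholds.block:
        return "block"
    if score >= thresholds.review:
        return "review"
    return "allow"
```

Keeping the thresholds in a separate object lets a moderation team tighten or relax the policy per scenario (for example, stricter cut-offs for account registration than for ordinary posts) without changing the routing logic.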

Practical Applications Across Multiple Business Scenarios

On social entertainment and content platforms, StarScope can be used to detect AI-generated faces in user-uploaded avatars, photos, or videos, helping reduce risks such as mass account registration, identity impersonation, and gray-market infiltration.

In financial or funding-related scenarios, StarScope can provide unified deepfake detection for facial images collected from multiple channels. Regardless of whether the images are captured by the same system, StarScope can serve as an independent authenticity verification layer, enhancing overall data credibility.

For clients who do not use the FinAuth facial liveness detection solution, StarScope can also directly integrate with existing facial recognition platforms or content systems, performing secondary deepfake detection on already-collected images or videos as a supplementary risk control capability.

Deepfake Detection Is Becoming a Foundational Platform Capability

As the share of AI-generated content in business traffic continues to grow, determining whether images and videos are authentic has become a basic prerequisite for business security.

Whether content originates from user uploads, external channels, or existing data assets, enterprises require an independent and reusable mechanism to conduct unified deepfake detection and risk assessment.

StarScope is built around this demand, providing stable and directly integrable deepfake detection services for a wide range of business scenarios.

We welcome you to contact us to learn more and experience the deepfake detection capabilities of StarScope.